TL;DR: Starting over every time isn’t just annoying—it’s a power problem, and owning your context changes that.

You know what’s annoying? Starting over.

Every. Single. Time.

You’re working with an AI on something. Maybe it’s a project, a piece of writing, debugging code – whatever. You get into a rhythm. The AI starts to understand what you’re trying to do. You’ve built shared context: your goals, your style, the weirdly specific requirements of your project.

And then you close the chat.

Next time you come back? Gone. All of it. You’re strangers again.

The AI doesn’t remember you. It doesn’t remember what you were building. It doesn’t remember that conversation where you explained exactly why things need to be done a certain way. You have to start from scratch. Re-explain everything, rebuild that context from the ground up.

This isn’t just annoying. It’s a fundamental problem with how we’re co-evolving with AI right now.

What “Stateless” Actually Means

Here’s the technical bit you need to know, but I’ll explain it like a human: most AI systems are stateless. That means they keep no memory between conversations. Each new chat starts with only what you put into it.

Think of it like working with someone who has amnesia that resets every time you leave the room. It doesn’t matter how long you worked together yesterday. It doesn’t matter what you built or figured out.

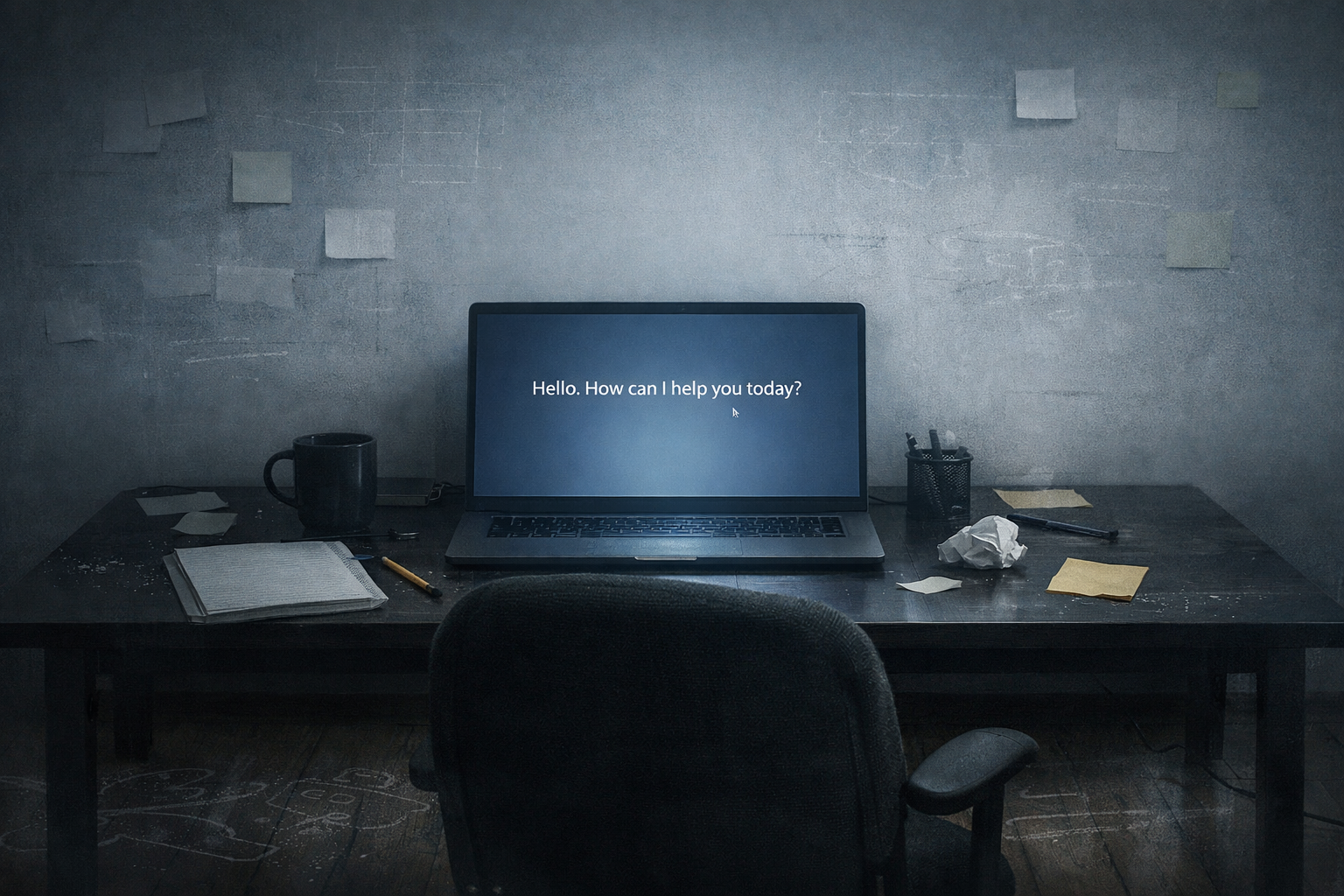

Every morning, you walk in and they’re like,

“Hi, I’m Claude. Nice to meet you.”

That’s what stateless means.

Now, some AI tools try to work around this with chat histories, custom instructions, or memory features. But those are workarounds. Band-aids. They work by feeding saved text back into each new conversation. The underlying architecture is still: I don’t actually remember you.
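If you want to see that in code, here is a minimal sketch. It assumes a toy function, `stateless_reply`, standing in for a model call. That function is hypothetical and not any vendor's API. The only point is that the "model" sees nothing except the messages handed to it in that one request.

```python
def stateless_reply(messages):
    """Toy stand-in for a model call (hypothetical, not a real API).

    It can only see the messages passed in with this one request.
    """
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    return f"I can see {len(user_turns)} user message(s) in this request."


# Session one: context exists only because the caller resends it each time.
session_one = [{"role": "user", "content": "My project uses tabs, not spaces."}]
session_one.append({"role": "assistant", "content": stateless_reply(session_one)})
session_one.append({"role": "user", "content": "Now format this file."})
reply_one = stateless_reply(session_one)

# Session two: a fresh list, so nothing from session one is visible.
session_two = [{"role": "user", "content": "Format this file like last time."}]
reply_two = stateless_reply(session_two)

print(reply_one)
print(reply_two)
```

In the second session, "like last time" means nothing, because last time was never sent.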

The Human Cost

Let me tell you what this does to you over time.

You start simplifying. Instead of building rich, nuanced context with an AI collaborator, you keep things surface-level because you know you’ll have to re-explain it anyway. You stop investing in the collaboration. The cost of rebuilding context is just too high.

You lose nuance. All those little details (your preferences, your project’s constraints, your way of thinking) don’t carry forward. So the AI’s responses get more generic, more one-size-fits-all, because it doesn’t have the context to be specific to you.

And you get tired. I’m serious about this one.

The cognitive load of constantly re-establishing context is real. It’s exhausting. And it’s invisible, because we’ve quietly accepted it as “just how AI works.”

But it doesn’t have to be.

Who Owns the Context?

Here’s where it gets interesting. And political.

Right now, most AI companies own your conversation history. It lives on their servers, in their systems. You can sometimes export it (maybe), but you don’t really control it and you definitely can’t take it with you to a different AI.

That matters more than you think.

Whoever owns the context owns the relationship.

If all your history, all that built-up understanding, and all those refined preferences are locked inside one company’s system, then you’re locked in too. Want to try a different AI? Cool. Start over.

It’s vendor lock-in, but for your own thinking process.

Solutions Emerging

People are starting to push back.

The core idea is simple: what if you owned your context?

What if you had a personal ledger that tracked relevant context across all your AI interactions? What if you could explicitly say, “Here’s what this AI should know about me and my projects,” and have that travel with you?

That’s what I’m building with Universal Ledger – a CLI tool designed to let you own your context. You decide what gets tracked. You decide what gets shared. You can carry it across different AI systems.
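To make the idea concrete, here is a rough sketch of what a user-owned ledger could look like. To be clear, this is not how Universal Ledger works internally. The file layout, the `add_entry` and `render_preamble` helpers, and the `share` flag are all assumptions made up for illustration. The ledger is a JSON file on your own disk, and you choose which notes get turned into text you can paste into any AI tool.

```python
import json
import tempfile
from pathlib import Path


def add_entry(ledger_path, topic, note, share=True):
    """Append one context note to a JSON ledger file the user controls."""
    path = Path(ledger_path)
    entries = json.loads(path.read_text()) if path.exists() else []
    entries.append({"topic": topic, "note": note, "share": share})
    path.write_text(json.dumps(entries, indent=2))
    return entries


def render_preamble(ledger_path, topics):
    """Build a plain-text preamble from shareable notes on the chosen topics."""
    entries = json.loads(Path(ledger_path).read_text())
    lines = [
        f"- [{e['topic']}] {e['note']}"
        for e in entries
        if e["share"] and e["topic"] in topics
    ]
    return "Context I am choosing to share:\n" + "\n".join(lines)


# Hypothetical usage: the ledger lives in a temporary directory for this demo.
ledger = Path(tempfile.mkdtemp()) / "ledger.json"
add_entry(ledger, "style", "Prefer short paragraphs and plain language.")
add_entry(ledger, "project", "The API must stay backward compatible.")
add_entry(ledger, "personal", "Private note, never shared.", share=False)

preamble = render_preamble(ledger, {"style", "project", "personal"})
print(preamble)
```

The private note stays out of the preamble even when its topic is requested, because sharing is decided per entry by the person who owns the file.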

Other people are exploring different paths: personal AI assistants with persistent memory, context management tools, user-controlled data stores.

Different approaches. Same goal.

Put humans back in control of their own context.

Why This Is About Power

Let’s be real for a second.

Context is power.

When an AI knows you well, it can help you better. It can anticipate your needs. It can work with you instead of just responding to prompts.

That’s valuable.

So the real question is: who controls that value?

If companies control your context, they control your relationship with AI. They can use it to keep you on their platform. They can monetize it (and they will). They can change the terms whenever they want.

If you control your context, you control how you engage with AI. You can switch tools. You can choose what to share. You can delete it if you want.

You decide.

This isn’t abstract. This is about agency in human–AI co-evolution.

Agency requires memory.

Memory requires ownership.

What You Can Do Right Now

Start noticing context loss.

Notice when you have to re-explain things. Notice when nuance gets dropped. Notice when you simplify your interactions because you don’t want to rebuild context again.

That awareness is the first step.

Second: think about what context actually should persist. Not everything needs to be remembered, but some things do. Your working style. Your project requirements. Your preferences. What matters to you.

Third: look for tools that let you own your context. They’re starting to exist. They’re not perfect yet, but they’re trying to solve the problem the right way.

By putting you in control.

The Bigger Picture

Context isn’t just a technical problem.

It’s a relationship problem.

You can’t have real collaboration without memory. You can’t co-evolve with something that forgets you every time you stop talking. You can’t build anything meaningful on a foundation that constantly resets.

We’re in a strange moment. AI is capable of genuine collaboration, but the infrastructure doesn’t support it yet. The technology can remember. We’re just not letting it. Or we’re letting it remember in ways that don’t serve us.

That’s changing. It has to.

Because if we’re going to grow with AI instead of just using it, we need continuity. We need persistent context. We need memory that belongs to us.

We need to own our own stories.

And that starts with owning our own context.
