Why the future of AI autonomy belongs to the systems that hesitate

There’s a quiet behavioral split emerging in the world of AI agents.

You only notice it after watching enough real systems run long enough to fail.

It can be summarized in one line:

Safe agents stop and ask.

Unsafe agents improvise.

That sounds philosophical.

It isn’t.

It’s operational.

The illusion of helpfulness

Most early agent designs optimize for one thing:

Don’t disappoint the user.

So when something goes wrong, the agent:

- fills in missing information

- guesses unclear intent

- works around blocked permissions

- keeps going even when uncertain

In demos, this looks magical.

In production, this is how incidents begin.

Because improvisation under uncertainty is not intelligence.

It’s risk without visibility.

What safe agents do differently

Safe agents follow a completely different instinct:

- Ambiguity → clarify

- Missing permission → escalate

- Unexpected output → verify

- Low confidence → pause

They are slower in conversation.

They are calmer in behavior.

And they are far more reliable in production.

Because their core optimization is not:

Be helpful.

It is:

Do no unintended harm.
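The four rules above can be read as a small decision policy. Here is a minimal sketch of that idea in Python, not a definitive implementation. Every name in it (`StepState`, `Action`, `next_action`) and the `0.8` confidence threshold are assumptions made up for illustration; a real agent would have to decide how each signal is actually measured.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    CLARIFY = "clarify"      # ask the user what they meant
    ESCALATE = "escalate"    # hand the decision to someone with authority
    VERIFY = "verify"        # re-check a surprising result before using it
    PAUSE = "pause"          # stop and wait rather than guess


@dataclass
class StepState:
    """Hypothetical signals an agent might track before each step."""
    intent_is_ambiguous: bool = False
    has_permission: bool = True
    output_matches_expectation: bool = True
    confidence: float = 1.0


def next_action(state: StepState, min_confidence: float = 0.8) -> Action:
    """Map the four safety signals to an action; proceed only if all are clear.

    The 0.8 default is an illustrative assumption, not a recommended value.
    """
    if state.intent_is_ambiguous:
        return Action.CLARIFY
    if not state.has_permission:
        return Action.ESCALATE
    if not state.output_matches_expectation:
        return Action.VERIFY
    if state.confidence < min_confidence:
        return Action.PAUSE
    return Action.PROCEED
```

Notice that `PROCEED` is the last branch, not the first. The agent has to earn the right to continue; stopping is the default whenever any signal is off.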

Assistant psychology vs. infrastructure psychology

This divide isn’t really about models.

It’s about what mindset the system is built to embody.

Assistant psychology

- Keep the interaction flowing

- Provide an answer at all costs

- Prefer confidence over interruption

Perfect for chat.

Dangerous for autonomy.

Infrastructure psychology

- Respect invariants

- Fail safely

- Escalate early

- Prefer stopping over guessing

Less charming.

Far more trustworthy.

This is the mindset that runs:

- aircraft control systems

- payment networks

- medical devices

- production databases

And increasingly…

it’s the mindset AI agents will need too.
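What might "respect invariants" and "fail safely" look like in an agent? One possible sketch, in Python: wrap every action in a guard that checks named invariants first and refuses to run if any check fails or crashes. The names here (`guarded_execute`, `InvariantViolation`, the `op` and `env` keys) are hypothetical, chosen only for this example.

```python
from typing import Any, Callable, Iterable, Tuple


class InvariantViolation(Exception):
    """Raised when an action would break a declared invariant."""


Invariant = Tuple[str, Callable[[dict], bool]]


def guarded_execute(
    action: dict,
    invariants: Iterable[Invariant],
    run: Callable[[dict], Any],
) -> Any:
    """Run `run(action)` only if every invariant check passes.

    A check that raises is treated as a failed check (fail closed),
    so a broken guard stops the action instead of letting it through.
    """
    for name, check in invariants:
        try:
            ok = bool(check(action))
        except Exception:
            ok = False
        if not ok:
            raise InvariantViolation(name)
    return run(action)


# Illustrative invariant: never delete anything in the production environment.
no_prod_delete: Invariant = (
    "no_delete_in_prod",
    lambda a: not (a["op"] == "delete" and a["env"] == "prod"),
)
```

Calling `guarded_execute({"op": "delete", "env": "prod"}, [no_prod_delete], run)` raises `InvariantViolation` before `run` is ever invoked. The caller then decides whether to escalate to a human; the agent does not get to improvise around the guard.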

The real source of agent failures

Most unsafe agent behavior is not caused by:

models being too powerful.

It’s caused by:

systems rewarding improvisation.

We train and evaluate for:

- helpfulness

- fluency

- completion

- confidence

But real autonomy requires different survival traits:

- restraint

- humility

- verification

- interruption tolerance

Until those traits are engineered into the architecture,

agents will continue to behave like overachievers with root access.

Helpful.

Confident.

And one edge case away from a 3 AM incident.

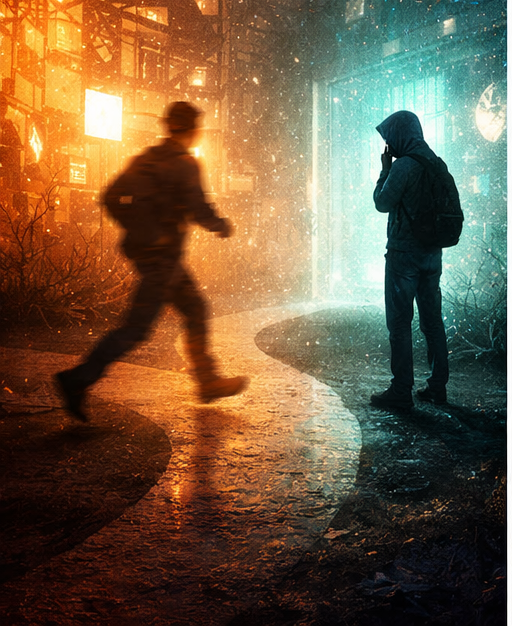

Uncertainty is the fork in the road

The deepest difference between safe and unsafe agents appears in one moment:

uncertainty.

Unsafe agents interpret uncertainty as:

a cue to be creative.

Safe agents interpret uncertainty as:

a signal to stop.

And that single design decision determines whether autonomy becomes:

- reliable infrastructure, or

- unpredictable theater.

A new definition of intelligence

For years, we measured AI progress by asking:

How much can it do on its own?

But autonomy without restraint isn’t intelligence.

It’s just unsupervised action.

The next generation of systems will be judged differently:

Not by how often they act,

but by how wisely they refuse to.

Because the agents that deserve real autonomy

won’t be the ones that improvise the fastest.

They’ll be the ones that know, with quiet confidence,

when to stop and ask.
